Context Becomes the Agent Platform

Executive Summary

The strongest AI discourse signal over the last 24 hours is a convergence around agent infrastructure: the defensible layer is shifting from generic model access toward context systems, observable execution, workflow ownership, and cheaper long-context operation. The practical question is no longer simply whether agents can act; it is whether their memory, decisions, provenance, and operating costs can be made legible enough for real work.

Notable Signals

Stephen Chin’s AI Engineer talk, “Connecting the Dots with Context Graphs,” offers the clearest technical version of the argument. Chin frames “context graphs” as a memory substrate for agentic systems: not just vector search over text chunks, but a combined layer of knowledge graphs, embeddings, graph algorithms, and reasoning traces. The key claim is that agents need to recover relationships and provenance that similarity search often drops: policy context, prior decisions, business entities, domain constraints, and the chain of reasoning behind an answer. In his framing, the value of graph-based agent memory is not abstract elegance; it is repeatability, compliance, debugging, and human review. A conventional audit log may show what happened, but a context graph can preserve more of the “why.” That is a notable corrective to autonomy-first agent rhetoric: the strongest near-term agent story is about inspectable assistance, not invisible delegation. Source: https://www.youtube.com/watch?v=eW_vxrjvERk
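To make the “why, not just what” idea concrete, here is a minimal sketch of a relationship store in which every edge carries its provenance. It is not Chin's implementation or any particular graph database's API: the `ContextGraph` and `Edge` classes, the relation names, and the example identifiers are all hypothetical, and embeddings and graph algorithms beyond a breadth-first walk are left out.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Edge:
    source: str
    relation: str
    target: str
    provenance: str  # hypothetical field: where this fact came from


@dataclass
class ContextGraph:
    # Adjacency list keyed by source node; each entry keeps full edges.
    outgoing: dict = field(default_factory=lambda: defaultdict(list))

    def add(self, source, relation, target, provenance):
        edge = Edge(source, relation, target, provenance)
        self.outgoing[source].append(edge)
        return edge

    def explain(self, start, max_hops=3):
        """Breadth-first walk from `start`; returns every edge reached."""
        seen, frontier, trail = {start}, [start], []
        for _ in range(max_hops):
            next_frontier = []
            for node in frontier:
                for edge in self.outgoing.get(node, []):
                    trail.append(edge)
                    if edge.target not in seen:
                        seen.add(edge.target)
                        next_frontier.append(edge.target)
            frontier = next_frontier
        return trail


# Illustrative data only: a decision, the policy it applied, and who approved it.
graph = ContextGraph()
graph.add("decision:refund-1042", "applied_policy", "policy:refund-v3", "agent trace, ticket 1042")
graph.add("policy:refund-v3", "approved_by", "team:finance", "policy document, section 2")
graph.add("decision:refund-1042", "concerns", "customer:acme", "CRM record")

trail = graph.explain("decision:refund-1042")
for edge in trail:
    print(f"{edge.source} -[{edge.relation}]-> {edge.target}  (from: {edge.provenance})")
```

A similarity search over text chunks could surface the refund policy, but the walk above also returns which decision applied it, who approved it, and where each of those facts was recorded, which is the part a reviewer needs.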

The Cognitive Revolution’s Andrew Lee interview extends the same theme into product strategy. The episode presents Tasklet’s agent stack as built around file-system context, agentic search, and summarization, then connects those choices to a broader question: which kinds of software survive when model providers keep moving up the stack? The item is flagged as a delayed discovery, found after the prior report’s cutoff, but it belongs in today’s synthesis because it directly reinforces the dominant thread. The argument is that the durable software layer is context ownership plus workflow integration, not a thin wrapper around model calls. If a product owns the work artifacts, understands how they relate, and can help agents search and summarize them across an actual operating environment, it has a stronger claim than a feature that can be absorbed into a frontier model UI. Source: https://www.cognitiverevolution.ai/three-kinds-of-software-survive-tasklet-s-andrew-lee-on-competing-to-be-a-horizontal-platform/

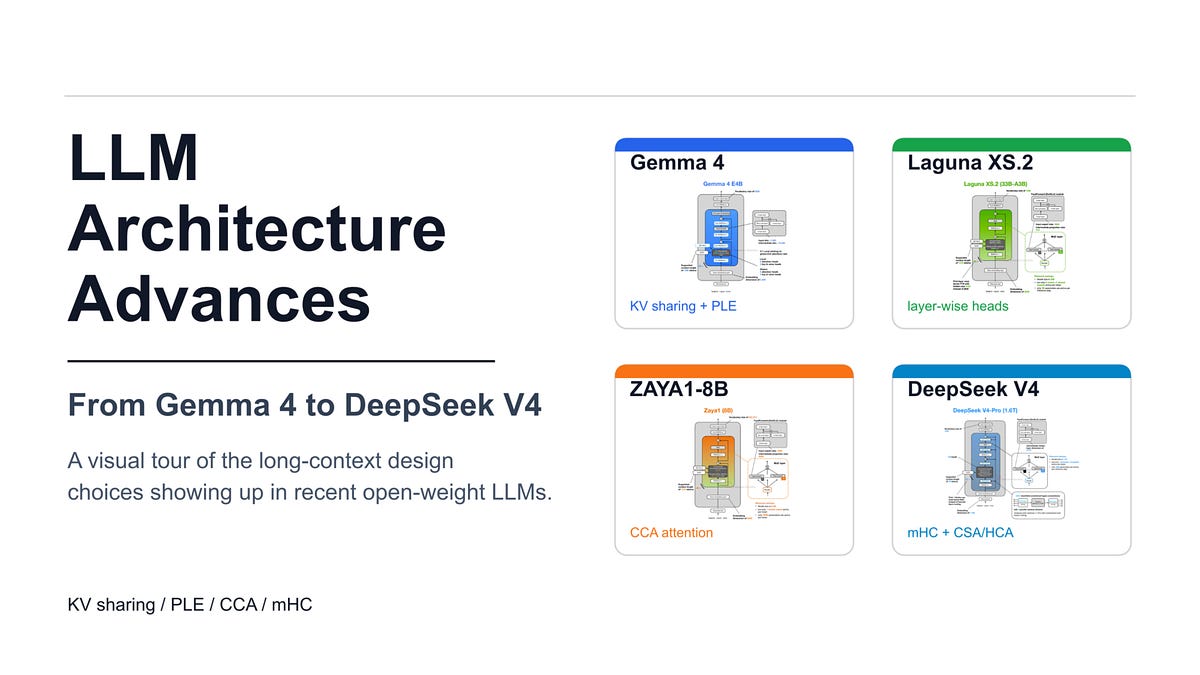

Sebastian Raschka’s architecture survey adds an infrastructure-level counterpart. His discussion of KV sharing, mHC, and compressed attention centers the economics of long-context inference rather than leaderboard-style capability claims. That matters because agent products increasingly assume persistent histories, larger working sets, and retrieval over more user or organizational context. If context is becoming the product layer, then memory bandwidth, cache reuse, and context compression become product constraints. The discourse around long context is therefore maturing: the issue is not only maximum window size, but whether long-running systems can afford to carry enough state to remain useful. Source: https://magazine.sebastianraschka.com/p/recent-developments-in-llm-architectures
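A rough back-of-the-envelope calculation shows why cache size becomes a product constraint. The sketch below uses the standard KV cache estimate for attention with a fixed number of key/value heads (two tensors per layer, one entry per token). The model dimensions are hypothetical, and treating cross-layer KV sharing as a simple divisor on the number of distinct caches is a simplifying assumption, not a description of any specific architecture in the survey.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_value=2, layers_per_shared_cache=1):
    """Estimate KV cache size for one sequence.

    2 accounts for keys and values; bytes_per_value=2 assumes fp16/bf16.
    layers_per_shared_cache > 1 models groups of layers reusing one cache
    (a simplification made for this sketch).
    """
    distinct_caches = -(-n_layers // layers_per_shared_cache)  # ceiling division
    return 2 * distinct_caches * n_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model: 32 layers, 8 KV heads, head dimension 128, 128,000-token context.
baseline = kv_cache_bytes(seq_len=128_000, n_layers=32, n_kv_heads=8, head_dim=128)
shared = kv_cache_bytes(seq_len=128_000, n_layers=32, n_kv_heads=8, head_dim=128,
                        layers_per_shared_cache=2)

GIB = 1024 ** 3
print(f"baseline: {baseline / GIB:.3f} GiB per sequence")   # 15.625 GiB
print(f"shared:   {shared / GIB:.3f} GiB per sequence")     # 7.812 GiB
```

Under these assumptions a single 128,000-token session holds roughly 15.6 GiB of cache, so halving it through sharing directly changes how many long-running agent sessions fit on one accelerator.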

Discourse Tension

These items point to a useful split in agent discourse. One side still markets agents as autonomous workers that will simply replace tasks end-to-end. The stronger practitioner signal is more conservative and more actionable: agents become valuable when the surrounding system turns context into an operating asset. That means explicit memory structures, provenance, permission boundaries, evals, observability, and cost controls.

The tension is especially visible in the move from “RAG” to richer context systems. Plain retrieval can answer questions from nearby text. Context graphs and file-system-aware agents are trying to model work: entities, relationships, decisions, policies, artifacts, and histories. This changes the evaluation question. Instead of asking only “did the model produce a plausible answer?”, teams need to ask whether the agent used the right context, preserved the right trace, exposed uncertainty, and left a reviewable path for the human or organization that depends on it.
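The four evaluation questions above can be turned into explicit checks on a recorded agent run. The following is a minimal sketch under stated assumptions: the `AgentRun` record, the `review` function, and the source identifiers are hypothetical, and a real evaluation harness would need far richer checks than set membership.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgentRun:
    answer: str
    trace: list  # (step description, source id) pairs recorded during the run
    stated_confidence: Optional[float] = None


def review(run, required_sources, known_sources):
    """Return one pass/fail flag per evaluation question."""
    used = {source for _, source in run.trace}
    return {
        # Did the agent consult the context this task required?
        "used_right_context": required_sources <= used,
        # Is there any recorded trace at all?
        "preserved_trace": len(run.trace) > 0,
        # Did the agent state how sure it was?
        "exposed_uncertainty": run.stated_confidence is not None,
        # Can a reviewer resolve every cited source?
        "reviewable_path": bool(used) and all(s in known_sources for s in used),
    }


# Illustrative run: plausible answer, good trace, but no stated confidence.
run = AgentRun(
    answer="Refund approved under policy v3.",
    trace=[
        ("looked up refund policy", "policy:refund-v3"),
        ("checked order history", "crm:acme"),
    ],
)
report = review(
    run,
    required_sources={"policy:refund-v3"},
    known_sources={"policy:refund-v3", "crm:acme"},
)
failed = [name for name, ok in report.items() if not ok]
print(failed)  # ['exposed_uncertainty']
```

The answer in this example would pass a plausibility check, yet the review still flags it, which is the shift in evaluation the paragraph describes.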

Workflow Implications

For builders, today’s signal suggests three priorities. First, treat context architecture as a first-class product decision, not a backend detail. Where context lives, how it is searched, and how it is summarized may determine whether an agent is useful or replaceable. Second, design for inspection from the start. Reasoning traces, provenance, and relationship-aware memory are not just compliance add-ons; they are debugging tools and trust surfaces. Third, watch long-context economics closely. Bigger working memory only matters if it can be served cheaply and reliably enough to support real workflows.

The practical takeaway: the agent layer that looks most durable is not “more autonomy” by itself. It is context that can be searched, explained, audited, and operated at scale.